
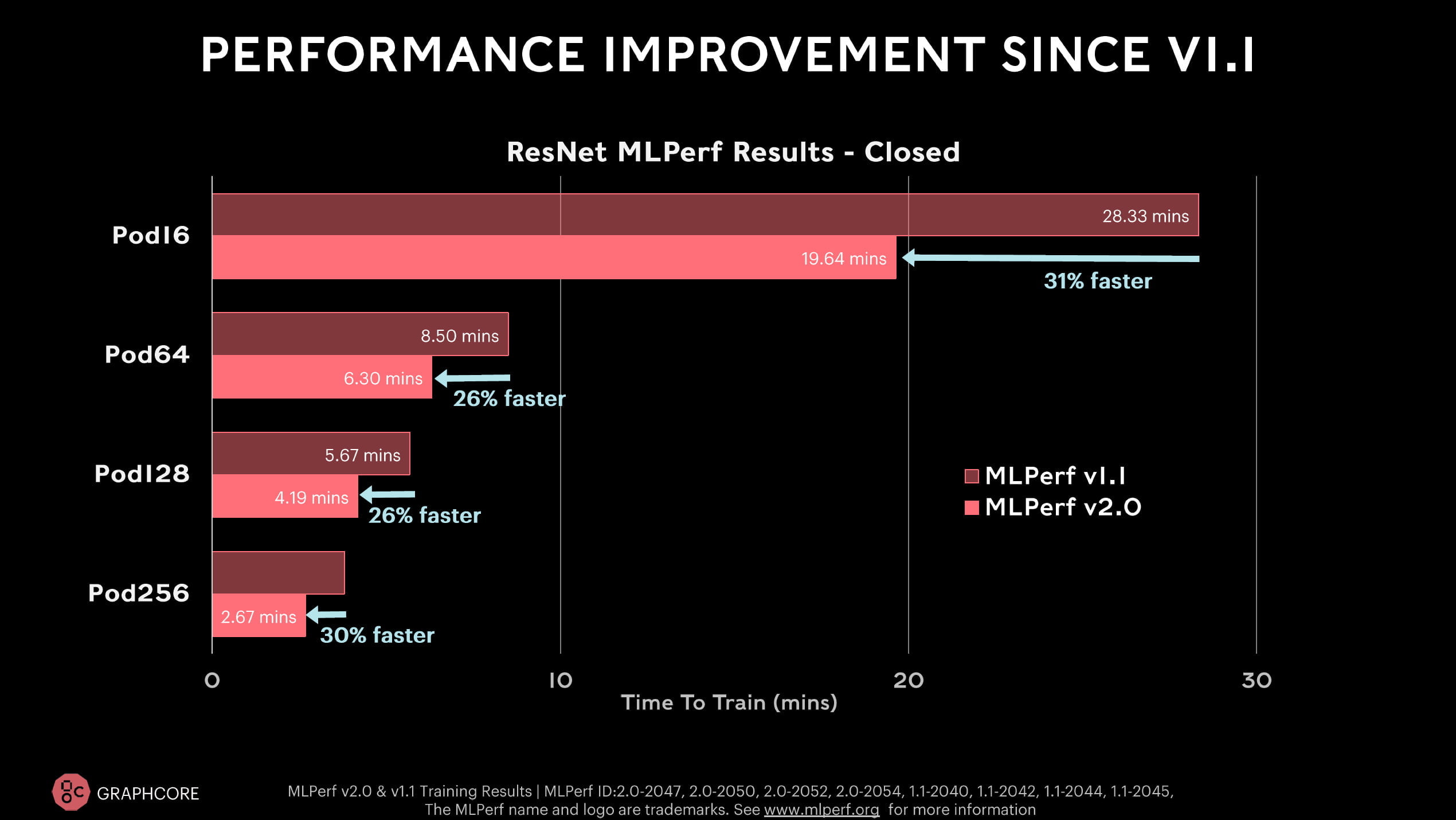

Nvidia also highlights that it is the only company able to show real-world performance in supercomputer configurations. This, it says, is important for training large AI models such as GPT-3 or Megatron Turing NLG.

Graphcore shows performance leap and willingness to cooperate

British chipmaker Graphcore is entering the race for the first time with the new Bow IPU. With the better hardware and software improvements, Graphcore achieves a 26 to 31 percent faster training time in the ResNet-50 benchmark and, on average, a 36 to 37 percent faster time in the BERT benchmark, depending on the system.

Graphcore improves BERT performance with Bow IPU and software improvements. | Image: Graphcore

For the first time, an external company also participates in the benchmark with a Graphcore system. Baidu submits BERT values for a Bow-Pod16 and a Bow-Pod64 and uses the AI framework PaddlePaddle, which is widely used in China. The values achieved in training are on par with Graphcore's submissions in the internal PopART framework. For Graphcore, this is a sign that its chips can also achieve good results in other frameworks.

Baidu participates for the first time with Graphcore pods.

Graphcore does not want to compete directly with Nvidia

According to Graphcore, the new Bow-Pod16 is clearly ahead of Nvidia's DGX-A100 server in the ResNet-50 benchmark and offers competitive prices.

Graphcore says that its systems can keep up with Nvidia's performance and price - at least in the benchmark tasks. | Image: Graphcore

In a press briefing on the MLPerf results, Graphcore points out the different architecture of its products: Nvidia, Google and Intel produce similar vector processors, whereas Graphcore's IPU is a graph processor. The participation in the MLPerf benchmark should therefore primarily show that Graphcore's IPU can deliver comparable performance.
In Nvidia's view, AI hardware diversity - the ability to run any model in MLPerf and beyond - and high performance are critical for real-world AI product development.

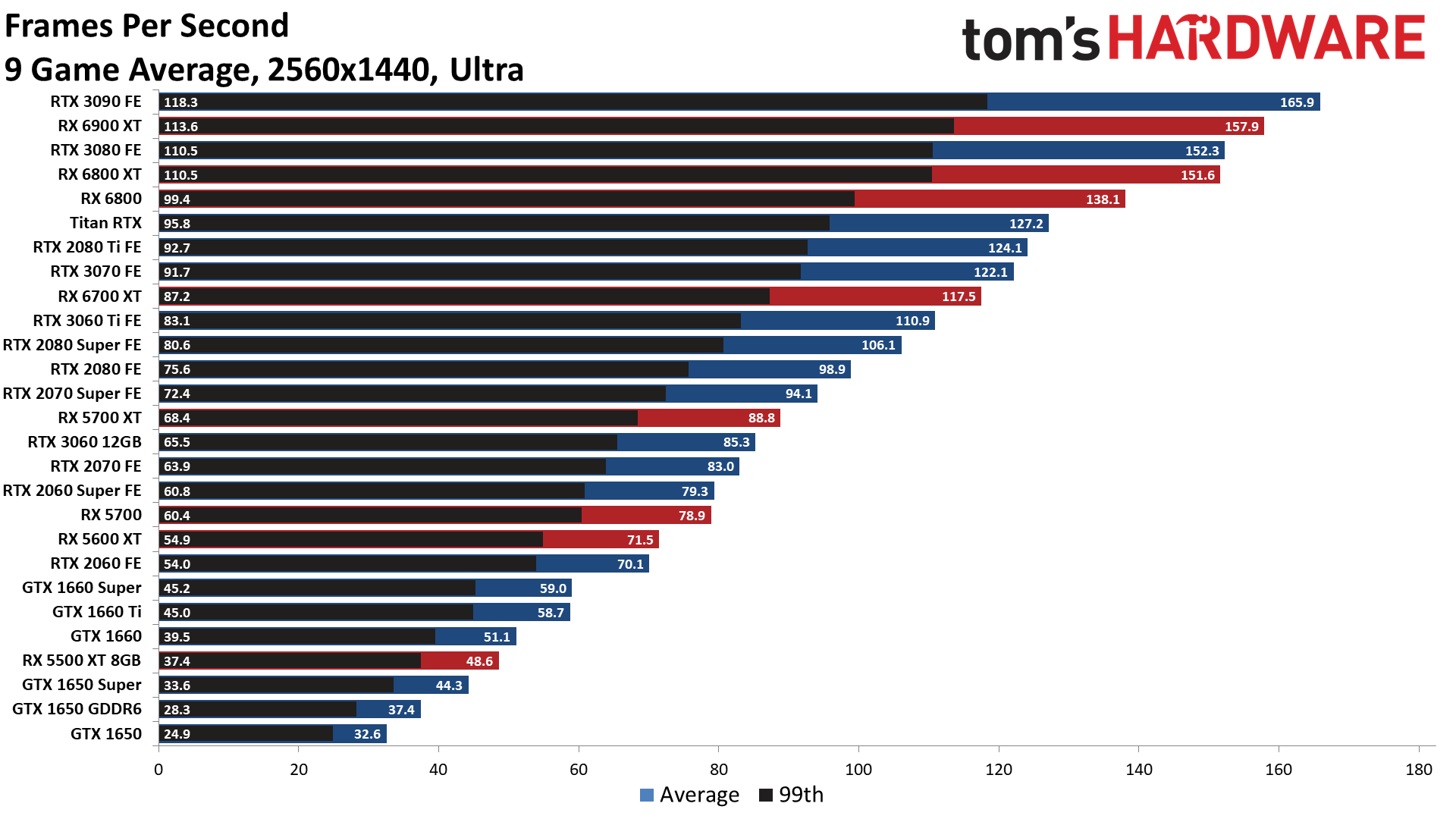

Google only participates in the RetinaNet and Mask R-CNN benchmarks, Graphcore and Habana Labs only in the BERT and ResNet-50 benchmarks. Nvidia participates in all benchmarks and can score with its supercomputer architecture.

According to Nvidia, the A100 also retains its leading position in a performance-per-chip comparison and is the fastest in six of the eight tests.

Nvidia sees itself further ahead in per-chip performance. | Image: Nvidia

Since the first tests began in 2018, Nvidia's AI platform has increased training performance by a factor of 23 thanks to the jump from V100 to A100 as well as numerous software improvements, the company says.

Nvidia sees one of the biggest advantages of its platform in its versatility: even relatively simple AI applications, such as asking questions about an image via voice input, require multiple AI models. Developers need to be able to design, train, use, and optimize these models quickly and flexibly.

MLPerf Training 2.0: Nvidia owns 90 percent

Nvidia's systems dominate this year's benchmark as well: of all the submissions in the benchmark, 90 percent are built on Nvidia's AI hardware. All Nvidia systems rely on the two-year-old Nvidia A100 Tensor Core GPU in the 80-gigabyte variant and participate in all eight training benchmarks in the closed competition. The remaining three participants are Google's TPUv4, Graphcore's new Bow IPU, and Intel Habana Labs' Gaudi 2 chip.

“We have created a technology that dramatically outperforms legacy processors such as GPUs, a powerful set of software tools that are tailored to the needs of AI developers, and a global sales operation that is bringing our products to market.”

2020 for Graphcore included the launch of our Mk2 IPU processor, the GC200, and data centre compute systems, the IPU-M2000 and IPU-POD 64 for scale-out. “Our first published benchmarks for the new systems show them clearly dominating the latest GPU-based setups,” the company said. “We also launched the Graphcore Partner Program. This impressive global network includes some of the world’s most respected distributors, resellers and OEMs. And, most recently, we launched Poplar v1.4, including full PyTorch support, ensuring that the most widely used frameworks are now simple to implement on the IPU and yield extremely impressive performance results.”

“The confidence that they have in us comes from the competence we have demonstrated building our products and our business,” the company said.
